Using Open-Source LLM Development to Power Enterprise Generative AI Workflow Automation

Combining Open-Source LLM Development with Generative AI Workflow Automation is one of the more powerful and cost-effective approaches to enterprise AI available today. Open-source models provide the language intelligence; workflow automation provides the operational context and integration. Together, they enable organisations to automate complex, document-intensive business processes while keeping data on infrastructure they control, often at a lower cost per document than cloud API-based approaches once volumes are high.

Why Open-Source for Automation

For workflow automation use cases, Open-Source LLM Development offers specific advantages over commercial API approaches. Automation workflows typically involve repetitive, high-volume processing, which is exactly the scenario where per-token API pricing becomes expensive, because the bill grows in direct proportion to the number of tokens processed. Running an open-source model on dedicated infrastructure replaces that variable cost with a largely fixed infrastructure cost, so the cost per document falls as volume rises, provided the hardware is well utilised.
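The variable-versus-fixed cost argument can be made concrete with a break-even calculation. The following is a minimal sketch: the function names and both price figures are hypothetical placeholders, not real vendor or hardware prices, and the fixed cost is assumed to cover hardware, hosting, and operations.

```python
def monthly_api_cost(tokens_per_month: int, price_per_million_tokens: float) -> float:
    """Variable cost: grows linearly with the number of tokens processed."""
    return tokens_per_month / 1_000_000 * price_per_million_tokens


def break_even_tokens(fixed_monthly_cost: float, price_per_million_tokens: float) -> float:
    """Monthly token volume at which API spend equals the fixed infrastructure cost."""
    return fixed_monthly_cost / price_per_million_tokens * 1_000_000


# Hypothetical figures, for illustration only.
PRICE_PER_MILLION = 10.0   # assumed API price, USD per million tokens
FIXED_MONTHLY = 5_000.0    # assumed self-hosting cost, USD per month

threshold = break_even_tokens(FIXED_MONTHLY, PRICE_PER_MILLION)
api_bill = monthly_api_cost(2_000_000_000, PRICE_PER_MILLION)

print(f"Break-even volume: {threshold:,.0f} tokens per month")
print(f"API cost at 2 billion tokens per month: ${api_bill:,.2f}")
```

Below the break-even volume the API is the cheaper option under these assumptions; above it, the fixed-cost deployment is cheaper, as long as the assumed infrastructure can actually serve that volume.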

Matching Model to Task

A core principle of effective Open-Source LLM Development for automation is matching model size to task complexity. Document classification, information extraction, and structured data generation can often be handled by smaller, faster, cheaper models, with larger models reserved for tasks that genuinely require broader reasoning. This model routing strategy is a key efficiency lever in Generative AI Workflow Automation architecture.
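One simple way to express model routing is a lookup from task type to model tier, with the larger model as the fallback for anything unrecognised. This is a sketch only: the tier names `small-model` and `large-model` and the task-type labels are hypothetical, and a production router might also consider input length or a measured difficulty score.

```python
# Hypothetical model identifiers; substitute whatever models are actually deployed.
SMALL_MODEL = "small-model"
LARGE_MODEL = "large-model"

# Task types the text describes as often suitable for smaller models.
ROUTES = {
    "classification": SMALL_MODEL,
    "extraction": SMALL_MODEL,
    "structured_generation": SMALL_MODEL,
}


def route(task_type: str) -> str:
    """Return the model tier for a task, defaulting to the larger model."""
    return ROUTES.get(task_type, LARGE_MODEL)


for task in ("classification", "extraction", "multi_document_reasoning"):
    print(task, "->", route(task))
```

Defaulting to the larger model is a deliberately conservative choice here: an unknown task costs more to process but is less likely to be handled by a model that is too weak for it.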

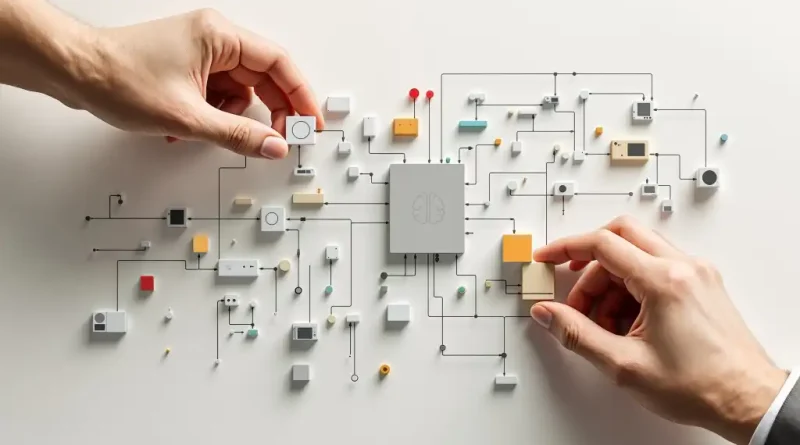

Building the Automation Pipeline

Effective Generative AI Workflow Automation is built on three layers: document ingestion and preprocessing, model inference, and output validation and routing. Open-Source LLM Development for automation should include robust error handling at each layer, plus confidence thresholds that route uncertain outputs to human review rather than passing them downstream unchecked.
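The three layers and the human-review fallback can be sketched as follows. Everything here is illustrative: `Prediction`, `stub_infer`, the route names, and the 0.8 threshold are assumptions, and `stub_infer` stands in for a real model call with hard-coded confidence values.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Prediction:
    label: str
    confidence: float  # expected range 0.0 to 1.0


def preprocess(raw: str) -> str:
    """Layer 1: normalise whitespace in the ingested document text."""
    return " ".join(raw.split())


def stub_infer(text: str) -> Prediction:
    """Layer 2 stand-in: a real deployment would call the model here."""
    if "invoice" in text.lower():
        return Prediction("invoice", 0.95)
    return Prediction("unknown", 0.40)


def validate_and_route(pred: Prediction, threshold: float) -> str:
    """Layer 3: accept confident outputs, send the rest to a person."""
    return "auto" if pred.confidence >= threshold else "human_review"


def run_pipeline(raw: str, infer: Callable[[str], Prediction], threshold: float = 0.8) -> str:
    try:
        pred = infer(preprocess(raw))
    except Exception:
        # Any failure in ingestion or inference falls back to human review
        # instead of passing an unchecked result downstream.
        return "human_review"
    return validate_and_route(pred, threshold)


print(run_pipeline("  Invoice   #123  ", stub_infer))
print(run_pipeline("Handwritten note", stub_infer))
```

In practice the threshold would be tuned against labelled samples, since a model's reported confidence is not necessarily well calibrated.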

Governance and Audit

Automated workflows using Open-Source LLM Development must maintain complete audit trails that document every AI decision, the inputs that drove it, and the confidence level associated with it. In regulated environments, Generative AI Workflow Automation additionally requires model version tracking and performance monitoring to satisfy regulatory expectations around AI system governance.
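An audit entry covering the decision, its input, the confidence level, and the model version might look like the sketch below. The field names are hypothetical, and storing a SHA-256 hash of the input rather than the input itself is an assumption made for illustration; some regulatory regimes may require the full input to be retained.

```python
import hashlib
import json
from datetime import datetime, timezone


def audit_record(document_text: str, decision: str, confidence: float, model_version: str) -> dict:
    """Build one audit entry for a single automated decision."""
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model_version": model_version,
        # Hash of the input so the record can be matched to the source document.
        "input_sha256": hashlib.sha256(document_text.encode("utf-8")).hexdigest(),
        "decision": decision,
        "confidence": confidence,
    }


def to_jsonl(record: dict) -> str:
    """Serialise a record as one JSON line, suitable for an append-only log."""
    return json.dumps(record, sort_keys=True)


record = audit_record("Invoice #123 ...", "approve", 0.95, "model-v1")
print(to_jsonl(record))
```

Writing one JSON object per line keeps the log append-only and easy to filter by model version when monitoring performance over time.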

Conclusion

Open-Source LLM Development is a strong foundation for enterprise Generative AI Workflow Automation, combining data security, cost efficiency at scale, and the flexibility to customise models for specific automation tasks. Organisations that build this capability now will find that it scales in value as automation use cases multiply across their operations.